Summarizing Tweets in a Disaster (part II)

Its May 2015, and rescue teams are working to rebuild Nepal following the April earthquake (and its aftershocks). Can these efforts be helped by twitter?

In the previous post, I built a tweet summarizer, which took all of the tweets tweeted between April 25th and May 28th and selected a short summary of tweets which would be most useful to rescue teams:

Summarizing Tweets in a Disaster (part I)

Now, I want to go one step further: rather than just selecting the best tweets, can I generate my own sentences which effectively summarize all of the useful information in the tweets?

Note: this post is essentially an implementation of this paper; its definitely worth reading if the subject interests you!

Contents:

- Making a word graph: I’ve already generated a summary of the best tweets, using content word tweet summarization (in the previous post). I am going to use these tweets, and consider all the possible word paths they contain (using a word graph).

- Investigating Informativeness and Linguistic Quality: I will then select the best word paths, by defining some new metrics: informativeness, and linguistic quality.

- Generating an Abstractive Summary: Finally, I’ll use these best word paths to generate an abstractive summary of the tweets.

I do this exercise twice, using both spaCy and NLTKas tokenizers (and using their respective selections of tweets from the content word tweet summarization).

Link to notebooks for spaCy and NLTK.

1. Making a word graph

To generate my own sentences, I am going to take the best tweets, and consider all of the possible word paths contained within them.

What exactly does this mean?

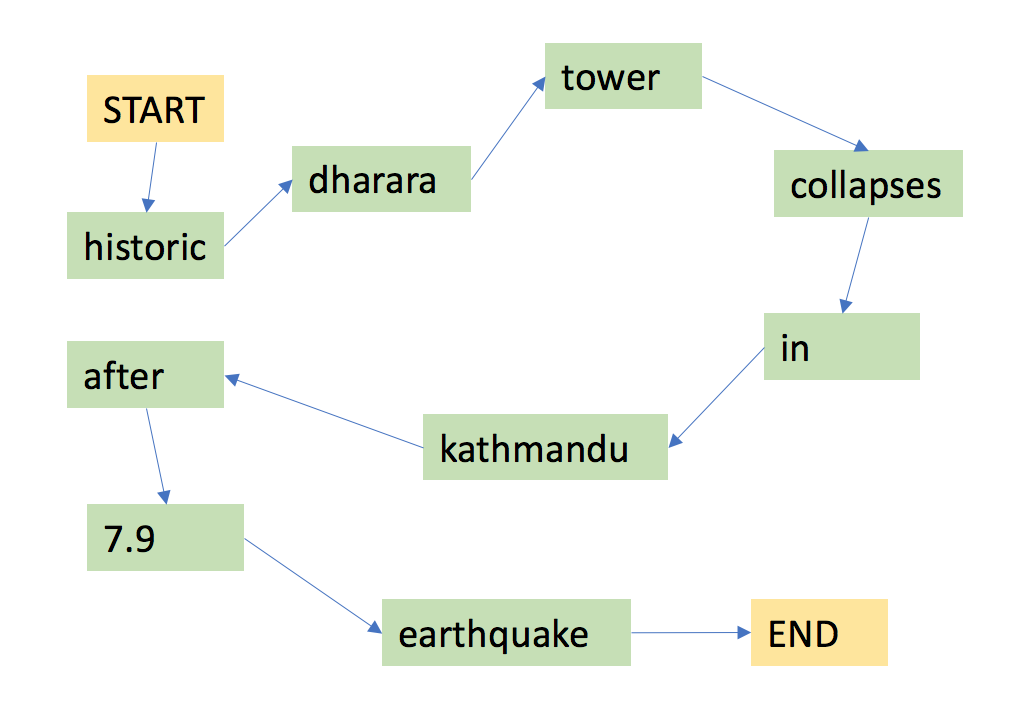

To understand what a word path is, I am first going to consider a word graph. A word graph is a graphical representation of a sentence, with nodes representing words and edges representing connections between words. For instance, the sentence “historic dharara tower collapses in kathmandu after 7.9 earthquake” could be represented with the following word graph:

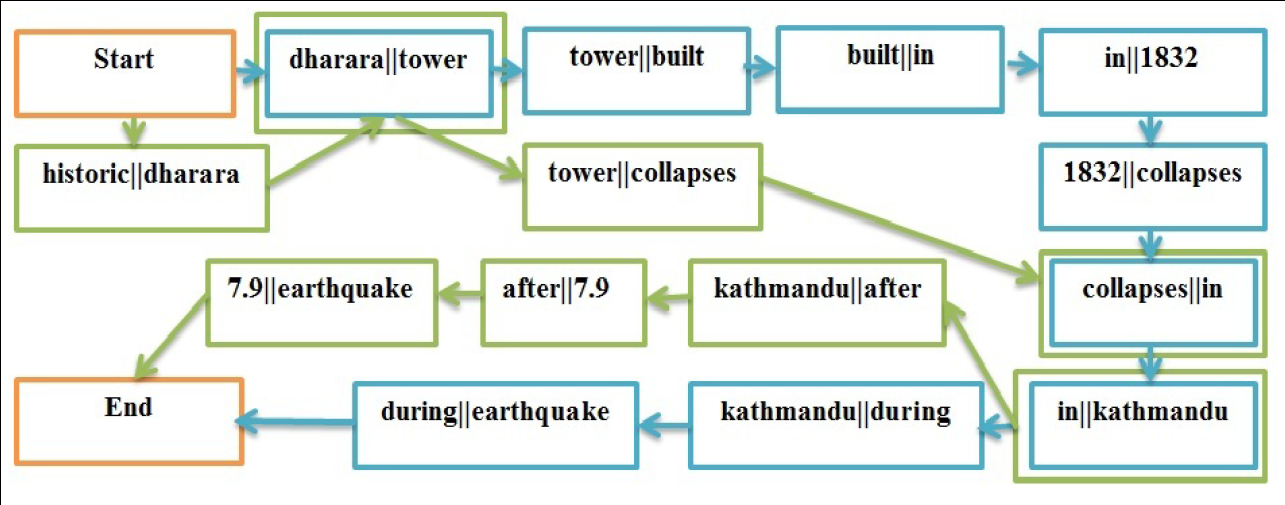

Word graphs become powerful when multiple sentences are added. For instance, lets say I add another sentence: “dharara tower built in 1832 collapses in kathmandu during earthquake”: (credit to Rudra et al for this figure. Bigrams rather than words are used at the nodes, but the principle is otherwise the same.)

Now, I can find word paths through this graph which are different from the original input sentences, for instance “dharara tower built in 1832 collapses in kathmandu after 7.9 earthquake”. I’ve been able to extract a some information from both input sentences, to make my own.

The goal of abstractive summarization is essentially to do this: generate my own word paths so isolate the best information from tweets.

Taking my own tweets, I can generate a word graph from them using bigrams which I can then use to generate all of the possible worth paths. Many of the word paths generate non sensical sentences, such as

[Nepal quake - Buildings collapse in Delhi for queries regarding tragic Earthquake Rocks Nepal victims']

so I am hoping to get useful word paths, which also make sense.

2. Informativeness and Linguistic Quality

To get useful word paths which make sense, there are two metrics I will measure: informativeness, and linguistic quality.

2.1. Informativeness

Informativeness really means how representative the tweet is of the corpus of tweets I have. Luckily, there is an easy way to quantify that. When I was selecting tweets for the summarization in the last post, I generated something called a tf-idf term matrix. This is a matrix, containing all the vocabulary for all the tweets:

Each column represented a word. If a tweet in row [i] contains the word represented by column [j], then matrix element

[i][j] will contain that word’s tf-idf score. Otherwise, it will contain a 0.

I can therefore generate a vector which approximately represents the body of tweets, by finding the average of each column:

Where the above image represents an array of height 1 with a length equal to the total vocabulary size of all the tweets, populated by the column mean of each value for all the tweets.

I can generate a similar row for each word path, and compare the difference between this average-vector and the word path vector. I do this finding their cosine similarity, which measures the cosine angle between the two vectors (smaller is more similar).

2.2. Linguistic Quality

Since many of the word paths are nonsensical, it is important to pick the grammatically correct sentences which make sense. How can I do this?

One way is to look at how likely it is that sequences of words in the word paths I have chosen would occur in ‘correct English’. I can do this by analyzing a text of ‘correct English’, and measuring how frequently different word sequences occur. I can then compare this to my word paths; if a word path is full of frequently occurring word sequences, it is more likely to be linguistically correct, so I will score it higher.

Word sequences of length n are known as n-grams, so this approach to linguistic quality is known as an n-gram linguistic model.

\begin{equation} P(w_{i} | w_{i-1}, w_{i-2}) \end{equation}

A trigram (an n-gram when n = 3) calculates the above equation: what is the probability that the next word in the word path is the word that it is, considering the two words that came before it, where the probability is compared to a corpus of English.

Luckily, there is a toolkit called kenlm which has been designed to do exactly this (and here is a great post which goes into more detail on how linguistic quality is extracted from n-grams).

Using kenlm, I used the Corpus of Contemporary American English (COCA) as my ‘correct English’ reference, and scored the linguistic quality of the word paths.

3. Generating an Abstractive Summary

Just like in part 1, I used these components to generate an Integer Linear Problem, which could be solved. Considering all the word paths, I wanted to select the word paths with the highest informativeness scores and the highest linguistic quality, which also contain the most content words.

Mathematically, I can write this down as

\begin{equation} \sum_{i=1}^{n} LQ(i) \cdot I(i) \cdot x_{i} + \sum_{j=1}^{m} y_{m} \end{equation}

where LQ is the linguistic quality and I is the informativeness of the ith word path, subject to the constraints that:

- My total summary must be less than 150 words

- If a content word is selected, a word path which contains that content word must also be selected

- If a word path is selected, all content words in that word path must be selected.

I can also describe this mathematically as

\begin{equation} \sum_{i=1}^{n}x_{i} \cdot Length(i) \leq L \end{equation}

\begin{equation} \sum_{i\in T_{j}}x_{i} \geq y_{j}, j=[1, …, m] \end{equation}

\begin{equation} \sum_{j \in C_{i}} y_{j} \leq |C_{i}| \times x_{i}, i=[1, …, n] \end{equation}

I then used pymathprog, and integer programming module, to solve these equations (by maximizing the first equation, subject to the constraints of the second three equations).

This yielded the following results for spaCy:

spaCY:

--------------

valanche sweeps everest base camp , 34 minute of major earthquake

--------------

: mea control room no for nepal 25/04/2015 07:13 utc , april 25,nepalquake kathmanduquake

--------------

high alert after 7.9 magnitude earthquake perso _thquake

--------------

earthquake m7.5 strike 89 km nw of 4.5 + 91 11 2301 7905

--------------

thr r safe . apr 25 14:14 at 7.7 richter scale , via

--------------

sad day for the last 1 hour(s ) . associatedpress associated press news

--------------

: whole himalayan region be up and lalitpur make kathmandu 's 19th century nine - witness

--------------

: 09771 4261945/ 4261790 emergency helpline number in 80 year - typical indian

--------------

9779851135141 square afganistan bhutan emb

--------------

building collapse , 400 people kill in kathmandu-+977 98511 07021 , 9851135141

--------------

nepal n north east . kathmandu contact mr. adhikari 00977 - cnn

and for NLTK:

NLTK:

--------------

: LATEST Nepal's Kantipur TV shows at Ahmedabad from Kathmandu RestlessEarth GeographyNow

--------------

MEA opens 24hr Control Room in Nepal 20 00 29 UTC quake

--------------

: EarthquakeInNepal Helpline Numbers of Lamjung , Purvanchal & Kochi too !

--------------

: Warning India Bangladesh Pakistan Afganistan Bhutan Nepal Earthquake Rocks Nepal BBC

--------------

Dharahara also called Bhimsen Tower , 2 at 09:30 UTC , 9851135141

--------------

( Houston _0998 ) Avalanche Sweeps Everest in Nepal - New York Times

--------------

Kathmandu's Darbar Square after 7.9 magnitude in Tibet Nepalquake Embedded image permalink

--------------

5.00 earthquake Kathmandu Ambulance and 11 2301 2113 011 2301 4104 011 2301 7905

--------------

Update on 4/25 / 2015 06:37 UTC , Katmandu - Fox News

--------------

: 09771 4261945 / 15 @ 9:30 : Nepal AssociatedPress Associated Press news

--------------

Bravo Google You are faster than 300 people within 100km . 9 - France 24

--------------

: Patan Durbar Square after 7.7 quake : 079-2325190 0/902 / 9779851135 141

These are not great, and the tweet summarization is definitely a better method way of capturing the best useful tweets than abstractive summarization. Some of the word paths do make sense, but the vast majority do not.

4. Conclusion

This was an interesting incursion into Natural Language Processing. In particular, it was awesome to see how data science can be applied outside of machine learning and neural networks, and to use another optimization method (ILP).

It was also awesome to explore different NLP techniques, such as word graphs and n-grams.

I suspect the reason this method failed is because the tweets selected are too different; the word graph is excellent at taking similar tweets, and then finding a word path which extracts only the useful information from them. Since I started with the tweets selected by COWTS, the tweets were already a summarization.

If I tried again, I would use a broader range of tweets, to try and take advantage of this.